Liquid Cooling Failure

Modern systems make this less catastrophic than it could have been.

I’ve had dozens of computers over the last 40-some years since I purchased my first gen IBM PC in 1981. That system – and many of its successors – didn’t produce enough heat to require cooling fans. As systems got faster they generated more heat and required CPU and case fans to keep them cool.

Many of those fans failed and I learned to keep a supply of appropriate sizes of CPU fans and case fans from 80mm on up to 200mm. It’s always better to pull one out of the drawer than it is to drive to the computer store or wait for an on-line order to arrive.

Fans usually fail in one of two ways: quietly or noisily. Noisy failures are due to worn bearings and can sound like a rattle or a low-pitched thrumming. Quiet failures are due to the buildup of gunk which, according to The Collaborative International Dictionary of English, is “any thick gooey and messy substance.” The gunk builds up inside the fan bearing until the fan simply can’t rotate and the airflow stops.

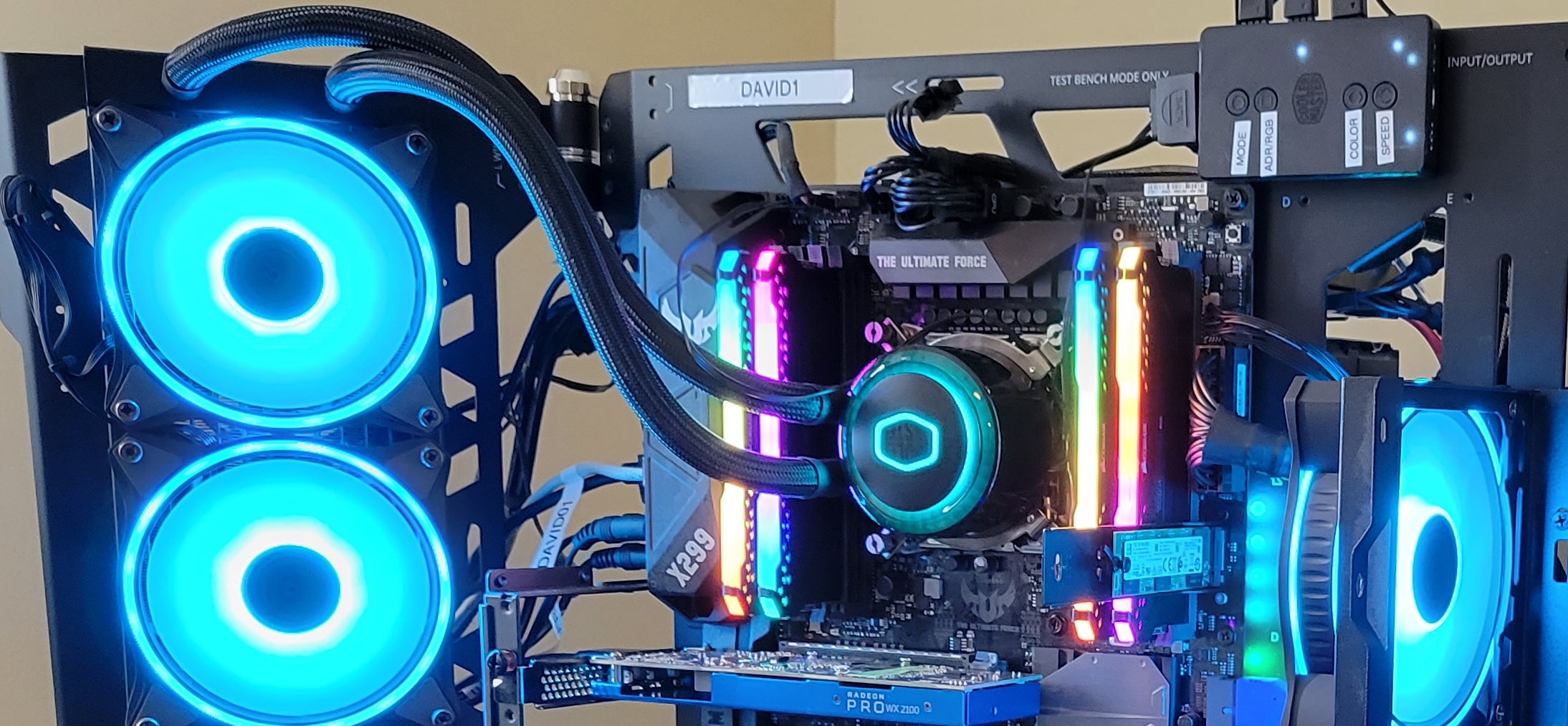

I started using liquid cooling in several of my newer systems over the last few years and none had ever gone bad until this week, when I experienced my first failure.

How It Started

One of my older tower computer systems began issuing sounds like a worn fan bearing. From the vibrations I could hear and feel at the top of the case, I could tell that the top 200mm fan was failing and needed replacement. Having put that off for a few weeks, I finally decided to replace the fan a few days ago.

I opened the case and, in what is my usual preventative maintenance (PM) procedure when opening a case, I looked for signs of additional imminent failures. The front 200mm fan was a bit sloppy in its bearing and that’s always a bad sign even if it’s not yet making noise. The rear 120mm fan resisted rotation by finger and stopped immediately. So that bearing was full of gunk and would soon fail noiselessly. After cleaning the accumulation of dust and debris from the interior of the case, I replaced all three of those fans and turned the computer on. It ran fine but seemed sluggish. So I put it under a heavy CPU load and looked at htop to view CPU speed and temperature.

I was shocked to see all 16 logical CPUs (8 cores) running at about 800MHz instead of the rated 4.7GHz. All cores were showing temperatures of around 99-100 degrees Celsius. These symptoms are typical of CPU cooling failure.
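The check that htop made visible can also be sketched in a few lines of Python. This is only a minimal sketch, assuming a Linux host that reports `cpu MHz` lines in `/proc/cpuinfo` and exposes temperatures under `/sys/class/thermal` (many systems report CPU temperature elsewhere, such as through hwmon). The function names, the "below half the rated speed" rule, and the 5-degree margin are illustrative assumptions, not anything taken from htop.

```python
import re
from pathlib import Path


def parse_cpu_mhz(cpuinfo_text):
    """Return the 'cpu MHz' value for each logical CPU found in /proc/cpuinfo text."""
    matches = re.findall(r"^cpu MHz\s*:\s*([\d.]+)", cpuinfo_text, re.MULTILINE)
    return [float(value) for value in matches]


def read_zone_temps_c(base="/sys/class/thermal"):
    """Return thermal zone temperatures in Celsius (the kernel reports millidegrees)."""
    temps = []
    for path in sorted(Path(base).glob("thermal_zone*/temp")):
        try:
            temps.append(int(path.read_text().strip()) / 1000.0)
        except (OSError, ValueError):
            continue  # skip zones that cannot be read or parsed
    return temps


def looks_throttled(freqs_mhz, temps_c, rated_mhz=4700.0, limit_c=100.0):
    """Heuristic (assumed thresholds): every CPU is below half its rated speed
    while the hottest sensor is within 5 degrees of the thermal limit."""
    if not freqs_mhz or not temps_c:
        return False
    slow = max(freqs_mhz) < 0.5 * rated_mhz
    hot = max(temps_c) >= limit_c - 5.0
    return slow and hot
```

On a live system the call might look like `looks_throttled(parse_cpu_mhz(Path("/proc/cpuinfo").read_text()), read_zone_temps_c())`, with `rated_mhz` and `limit_c` set for the CPU in question.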

This system used a Corsair H60 all-in-one (AIO) liquid cooling unit that had been working well for several years. It was one of my first liquid cooling installations and it kept the CPU temperatures at around 65-72 degrees Celsius. It ran hard because I kept the computer running full blast 24x7. I used the ancient touch test and determined that the CPU cooling head was quite hot and that the liquid exit tube from the cooling head was warm, but only for a few centimeters. It should have been warm for its entire length.

Clearly, the pump in the cooling head was just barely working. Even as I was pondering this, the pump finally disintegrated with a series of sounds eerily like an internal combustion engine throwing a rod. It did not spring a leak. The computer kept running at its much reduced speed.

The Explanation

Another symptom of the failing cooler was evident in the UPS wattage surges I observed. I use UPS systems on all of my hosts to ensure that short power outages don’t disrupt them. All of the UPS devices have displays that show power usage in watts. The UPS powering this system, one other system, a KVM, and a couple of other small devices would show almost 650 watts for a couple of minutes, after which power consumption would drop to about 250 watts. This cycle was due to the maximum temperature throttling built into the hardware.

As the cooling system began to fail, the CPU would heat up to the 100-degree Celsius maximum temperature limit, at which point the system would throttle itself down to a minimum CPU speed, which would allow it to cool down to a lower temperature. At that point the system would throttle back up and the cycle would start over.

This is not a good thing.
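The heat-up, throttle, cool-down, resume cycle can be illustrated with a toy simulation. This is a rough sketch under stated assumptions, not a model of any real CPU or firmware: only the 100-degree limit comes from the events described here, while the 80-degree resume point, the heating and cooling rates, and the function names are hypothetical values chosen to make the cycle visible.

```python
def simulate_throttle_cycle(steps, limit_c=100.0, resume_c=80.0,
                            heat_per_step=4.0, cool_per_step=5.0, start_c=70.0):
    """Simulate a CPU whose cooler has failed.

    Returns a list of (temperature, mode) tuples, where mode is the state
    the CPU ran in during that step. All rates and the resume threshold
    are assumed values for illustration only.
    """
    temp = start_c
    throttled = False
    history = []
    for _ in range(steps):
        mode = "throttled" if throttled else "full speed"
        if throttled:
            temp -= cool_per_step                     # minimum speed: CPU cools
        else:
            temp = min(limit_c, temp + heat_per_step)  # full speed: CPU heats
        history.append((temp, mode))
        # Decide the mode for the next step (simple hysteresis).
        if not throttled and temp >= limit_c:
            throttled = True
        elif throttled and temp <= resume_c:
            throttled = False
    return history


def count_throttle_events(history):
    """Count how many times the CPU went from full speed into throttling."""
    events = 0
    previous = "full speed"
    for _, mode in history:
        if mode == "throttled" and previous != "throttled":
            events += 1
        previous = mode
    return events
```

With these assumed rates, `count_throttle_events(simulate_throttle_cycle(30))` reports three throttle events in 30 steps, which mirrors the repeating surge-and-drop pattern on the UPS display.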

The Fix

In this case the cooling pump itself was the root cause of the problem. That was made quite clear by the symptoms of its final failure. The radiator fan was still functioning properly and exhibited no signs of potential failure.

I replaced the AIO liquid cooler with a 220 Watt air cooler that I had on hand. I always have spare parts on hand.

Everything is running smoothly now. The CPU temperatures are stable in the high 50s to low 60s Celsius. The CPUs all run steadily at 4.3GHz. The UPS shows stable power consumption.

Conclusions

The failing cooling system made itself known in a number of symptoms.

This event shows that device failures can be complex and present interesting symptoms. Some of those symptoms can be observed in multiple ways using multiple human senses. I first heard the noise the top fan was making, which alerted me to a problem. I used touch to feel the vibrations that indicated the top exhaust fan was failing, and to determine that the AIO cooling block was extremely hot and that the coolant tubing was warm for only a small distance at its exit point. I could also see the display on the UPS indicating a power surge cycle. Using tools like glances and especially htop, I could see the temperature and CPU speed fluctuations.

It is rather unusual for multiple cooling devices to fail simultaneously. Fortunately I had intended to replace the fan that was exhibiting obvious signs of impending failure. Coupled with my penchant for preventative maintenance, that allowed me to replace all of the case cooling fans before they actually failed. It also led me to the ultimate disintegration of the CPU cooler.

We can also see the efficacy of modern motherboard and CPU design in the use of throttling. This prevented a meltdown of the CPU and the probable destruction of both it and the motherboard. Modern PC systems are built to be quite resilient, as this failure shows.