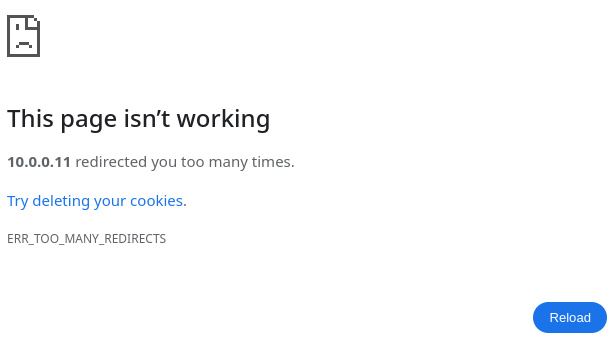

If you manage a website, you know that sometimes things can get a little messed up. You might remove some stale content and replace it with a redirect to other web pages. Or you might update a website to require a username and password to access certain pages. After making enough changes, you might discover that your website has stopped working the way you want it to. For example, you might see an error in your browser telling you that the website redirected too many times.

One way to debug this is with the wget command line tool. You might already know how to use wget to fetch individual web pages or files from websites, but wget is also a handy tool to keep in your system administrator “toolkit” for troubleshooting web server errors.

For example, when I need to debug a website, I rely on the -S option to show all server responses. When using wget for debugging, I also use the -O option to save the output to a file in case I need to review it later. Here is how I use these options with wget to debug a website issue.

A problem website

Let’s say we have updated a website to add a new feature to edit content. But when we access the website, we get the message from earlier in this article about too many redirects.

The first step in debugging the problem is to understand what is happening behind the scenes. The web browser doesn’t provide any details about where it has been redirected, so we need to use wget -S to get more detail about how the client is being bounced around the website:

$ wget -S http://10.0.0.11/edit/

--2024-04-05 11:30:57-- http://10.0.0.11/edit/

Connecting to 10.0.0.11:80... connected.

HTTP request sent, awaiting response...

HTTP/1.1 302 Found

Date: Fri, 05 Apr 2024 16:30:57 GMT

Server: Apache/2.4.58 (Fedora Linux)

X-Powered-By: PHP/8.2.17

Location: /login

Content-Length: 0

Keep-Alive: timeout=5, max=100

Connection: Keep-Alive

Content-Type: text/html; charset=UTF-8

Location: /login [following]

--2024-04-05 11:30:57-- http://10.0.0.11/login

Reusing existing connection to 10.0.0.11:80.

HTTP request sent, awaiting response...

HTTP/1.1 301 Moved Permanently

Date: Fri, 05 Apr 2024 16:30:57 GMT

Server: Apache/2.4.58 (Fedora Linux)

Location: http://10.0.0.11/login/

Content-Length: 231

Keep-Alive: timeout=5, max=99

Connection: Keep-Alive

Content-Type: text/html; charset=iso-8859-1

Location: http://10.0.0.11/login/ [following]

--2024-04-05 11:30:57-- http://10.0.0.11/login/

Reusing existing connection to 10.0.0.11:80.

HTTP request sent, awaiting response...

[..]

Location: /edit [following]

--2024-04-05 11:30:58-- http://10.0.0.11/edit

Reusing existing connection to 10.0.0.11:80.

HTTP request sent, awaiting response...

HTTP/1.1 301 Moved Permanently

Date: Fri, 05 Apr 2024 16:30:58 GMT

Server: Apache/2.4.58 (Fedora Linux)

Location: http://10.0.0.11/edit/

Content-Length: 230

Keep-Alive: timeout=5, max=81

Connection: Keep-Alive

Content-Type: text/html; charset=iso-8859-1

Location: http://10.0.0.11/edit/ [following]

--2024-04-05 11:30:58-- http://10.0.0.11/edit/

Reusing existing connection to 10.0.0.11:80.

HTTP request sent, awaiting response...

HTTP/1.1 302 Found

Date: Fri, 05 Apr 2024 16:30:58 GMT

Server: Apache/2.4.58 (Fedora Linux)

X-Powered-By: PHP/8.2.17

Location: /login

Content-Length: 0

Keep-Alive: timeout=5, max=80

Connection: Keep-Alive

Content-Type: text/html; charset=UTF-8

Location: /login [following]

20 redirections exceeded.

I’ve omitted most of the output from this command, but you can already see that accessing /edit redirects to /login, which then immediately redirects back to /edit, and so on. This is an endless loop, and wget gives up after following 20 redirects, which is its default limit.

With these details, the webmaster can determine that the login system is not working properly, and focus their attention on the /login page instead of trying to debug a (working) /edit page.
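On a longer trace, the loop can be easier to spot if you reduce the output to just the redirect targets. The following is a minimal sketch, not a feature of wget itself: redirect_chain and loop_targets are hypothetical helper names, and the sample input is only the [following] lines that appear in the output above.

```shell
#!/bin/sh
# Hypothetical helpers (not part of wget) for reading saved "wget -S" output.

# Print every redirect target that wget followed, in order.
# wget marks each redirect it follows with a trailing "[following]".
redirect_chain() {
    sed -n 's/^ *Location: \(.*\) \[following\]$/\1/p'
}

# Print only the targets that were followed more than once,
# which is a strong hint that the site is looping.
loop_targets() {
    redirect_chain | sort | uniq -d
}

# Sample input: the "[following]" lines from the trace above.
loop_targets <<'EOF'
Location: /login [following]
Location: http://10.0.0.11/login/ [following]
Location: /edit [following]
Location: http://10.0.0.11/edit/ [following]
Location: /login [following]
EOF
# prints: /login
```

To try this on a real trace, one option is to save wget's messages to a file with the -o option, for example wget -S -o trace.log http://10.0.0.11/edit/, and then run loop_targets < trace.log. The file name trace.log is an assumption. If 20 redirects is more output than you need, wget's --max-redirect option lowers the limit.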

Saving output

In this case, the website redirects too many times, so it never produces a valid web page. But if the web page instead prints an error that you need to keep for later, you can save a copy of the page to a file.

Let’s say you manage a website with an RSS feed, and the feed has stopped working. The feed displays an error code that you need in order to debug the problem, so the first step is to get a copy of the RSS feed. Use the -O option to save the output to a file:

$ wget -O t.rss http://10.0.0.11/rss/

--2024-04-05 11:44:09-- http://10.0.0.11/rss/

Connecting to 10.0.0.11:80... connected.

HTTP request sent, awaiting response... 200 OK

Length: unspecified [text/html]

Saving to: ‘t.rss’

t.rss [ <=> ] 273 --.-KB/s in 0s

2024-04-05 11:44:09 (26.2 MB/s) - ‘t.rss’ saved [273]

This saves a copy of the feed from http://10.0.0.11/rss/ to the temporary file named t.rss:

$ cat t.rss

<?xml version="1.0" encoding="UTF-8" ?>

<rss version="2.0">

<channel>

<title>Latest News</title>

<description>A summary of the latest news from XYZ.</description>

<link>http://example.com/news/</link>

*ERROR: Cannot read news items (ERR 8675309)

</channel>

</rss>

With this information, we can see that the RSS feed failed with the error code 8675309, which is likely an important error number on the system that produces the news feed. As the website administrator, you would then have the information you need to track down and fix the error.
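If you need to pull the error number out of a saved feed, for example to search for it in the news system's logs, a short filter can do it. This is only a sketch: feed_error_codes is a hypothetical helper name, and the "ERR" followed by a number format is taken from the sample feed above, so other systems will likely need a different pattern.

```shell
#!/bin/sh
# Hypothetical helper: print the number from each "ERR <number>" marker
# found on standard input. The marker format is taken from the sample
# feed above and is an assumption about how this site reports errors.
feed_error_codes() {
    sed -n 's/.*ERR \([0-9][0-9]*\).*/\1/p'
}

# Sample input: two lines from the saved feed shown above.
feed_error_codes <<'EOF'
<link>http://example.com/news/</link>
*ERROR: Cannot read news items (ERR 8675309)
EOF
# prints: 8675309
```

With the saved file from the previous step, you would run feed_error_codes < t.rss instead of using the inline sample.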

Other useful options to ‘wget’

The wget command has lots of other useful options. Use man wget to read the online manual and learn which options might be useful to you. For example, the -o option (lowercase) saves wget's own messages to a file instead of printing them to the screen. And the -T option sets a timeout, after which wget will stop waiting on the web server.
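As one way to combine the options from this article, here is a small wrapper you could adapt. Treat it as a sketch under stated assumptions: debug_fetch, the WGET variable, the 10-second timeout, and the file names wget.log and page.out are all hypothetical choices, not wget defaults.

```shell
#!/bin/sh
# Hypothetical wrapper that combines the options from this article.
# Set WGET=echo for a dry run that only prints the arguments
# instead of contacting the server.
debug_fetch() {
    # -S  show the server's response headers
    # -T  stop waiting on the server after 10 seconds
    # -o  write wget's messages, including the headers, to wget.log
    # -O  save the page itself to page.out
    "${WGET:-wget}" -S -T 10 -o wget.log -O page.out "$1"
}
```

Running debug_fetch http://10.0.0.11/edit/ leaves the server responses in wget.log and the page content in page.out, so both are available to review later.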